Server setup

- http

Caching can happen at several levels of the request-response chain, such as the client, a CDN, or a proxy. The setup determines which caching options (and other capabilities) are available.

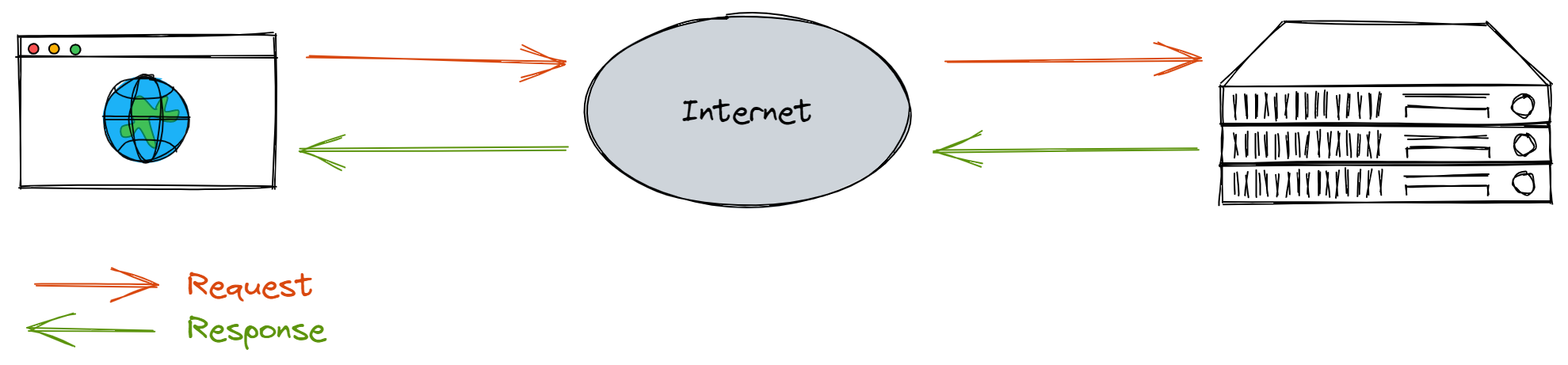

Client-Server

The simplest setup is one in which the web server itself handles all requests. This most likely means:

- higher latency (the time it takes to travel a distance), since the origin server might be located far away from the client;

- higher load on the server;

- higher security risk due to more (direct) exposure.

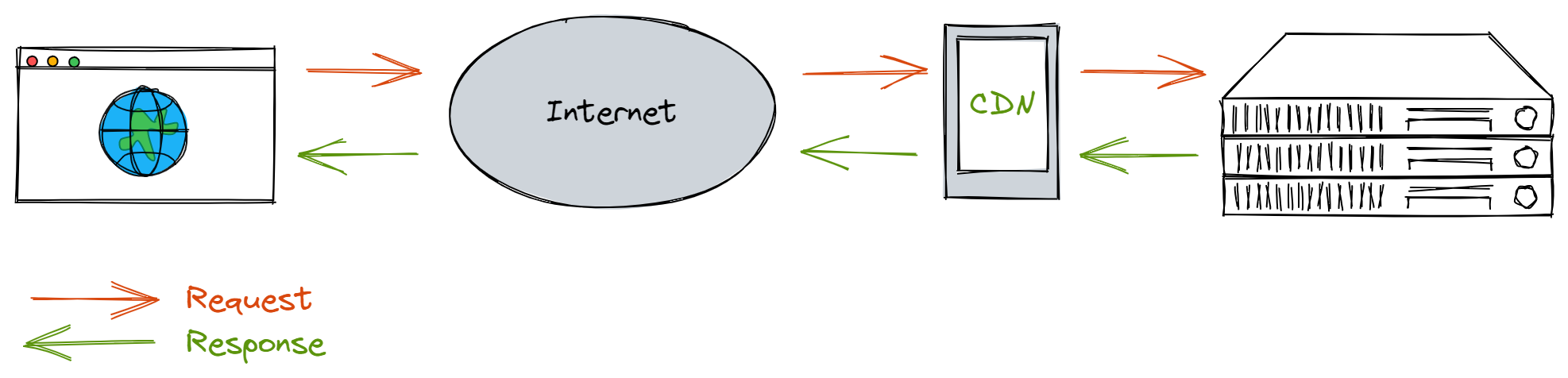

Client-CDN-Server

With a Content Delivery Network (CDN), the content is cached at multiple geographical locations. This shortens the distance to the client and thereby reduces both the latency and the load on the origin server. To keep cached content from going stale, HTTP headers are used to determine how long a cached copy remains valid.
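To make the role of the HTTP headers concrete, here is a minimal sketch of how a shared cache such as a CDN might decide whether a stored response is still fresh. It assumes only the `Cache-Control` header is considered; the function names `freshness_lifetime` and `is_fresh` are hypothetical, and real caches also take headers such as `Expires` and `Vary` into account.

```python
def freshness_lifetime(cache_control: str) -> int:
    """Return how many seconds a shared cache may reuse a response.

    Simplified: only Cache-Control is inspected, and no-cache is treated
    like "not reusable" (a real cache would revalidate with the origin).
    """
    directives = {}
    for part in cache_control.split(","):
        name, _, value = part.strip().lower().partition("=")
        if name:
            directives[name] = value

    if "no-store" in directives or "no-cache" in directives:
        return 0

    # A shared cache prefers s-maxage over max-age when both are present.
    for name in ("s-maxage", "max-age"):
        value = directives.get(name, "")
        if value.isdigit():
            return int(value)
    return 0


def is_fresh(cache_control: str, age_seconds: int) -> bool:
    """A cached copy is fresh while its age is below the freshness lifetime."""
    return age_seconds < freshness_lifetime(cache_control)
```

For example, a response stored with `Cache-Control: public, max-age=3600` could be served from the cache for up to an hour; after that, the cache would have to go back to the origin server.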

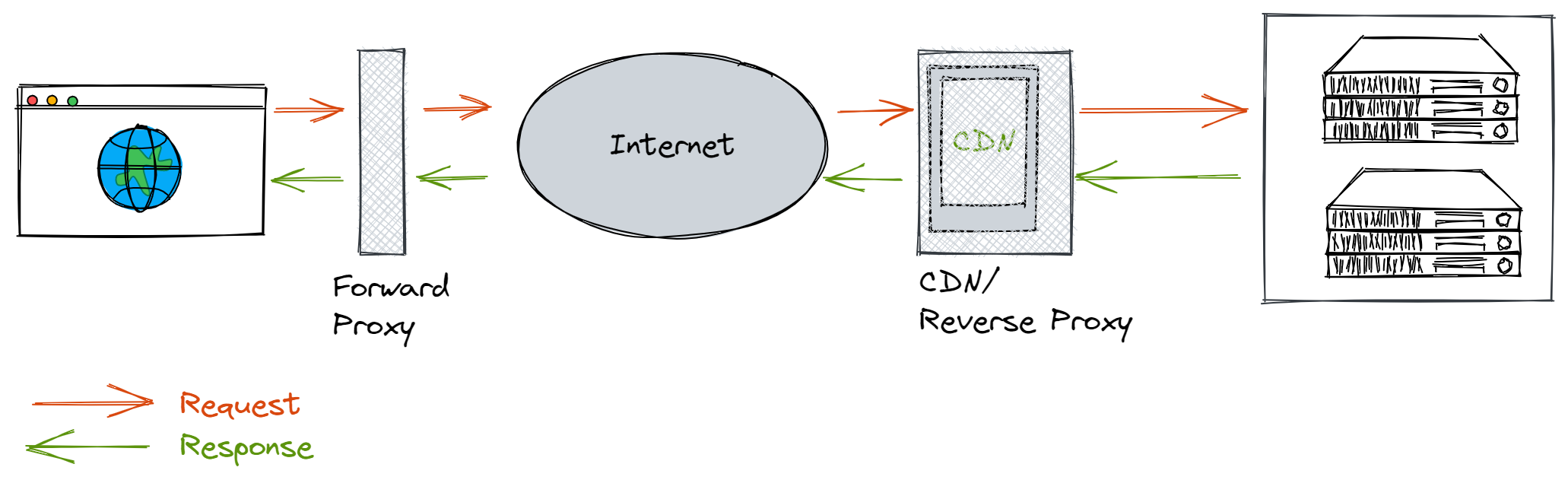

Client-Proxy-Server

As a next step, proxy servers can be added to the setup, sometimes in combination with CDN capabilities. CDN servers that cache content retrieved from your origin server are also referred to as edge servers. The name reflects the fact that these servers sit at the edge of the network, geographically close to the requesting client, unlike the origin server.

Forward proxy server

Sits between the client and the internet and handles requests to and from the internet (acting as a tunnel or gateway). It can hide the client's identity from the server and cache things like DNS lookups and web pages to reduce or control the bandwidth used by the clients. It ensures that no origin server ever communicates directly with a specific client.
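From the client's side, using a forward proxy mostly comes down to configuration. The sketch below uses Python's standard `urllib.request` module to build an opener that routes requests through a proxy. The proxy address `proxy.example.internal:3128` and the helper name `build_proxied_opener` are assumptions made for illustration only.

```python
import urllib.request

# Hypothetical forward proxy address; replace with the proxy of your network.
PROXY_URL = "http://proxy.example.internal:3128"


def build_proxied_opener(proxy_url: str) -> urllib.request.OpenerDirector:
    """Build an opener that sends HTTP and HTTPS requests via the proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


opener = build_proxied_opener(PROXY_URL)
# opener.open("http://example.com/") would now connect to the proxy,
# and the origin server would see the proxy's address, not the client's.
```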

Reverse proxy server

Sits between the internet and the server: it takes requests from the internet and forwards them to the server on the internal network. It hides the server's identity from clients and ensures that no client ever communicates directly with the origin server. The proxy can perform functions like:

- caching (offload webservers by caching (static) content)

- filtering

- load balancing (distribute the load to servers)

- authentication

- logging

- compression (compress/optimize content to speed up load time)
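Two of the functions listed above, caching and load balancing, can be illustrated with a small in-memory sketch. It is not a real proxy: the backends are plain Python callables standing in for origin servers, the class name `ReverseProxy` is hypothetical, and the cache never expires entries, which a real reverse proxy would handle based on the HTTP caching headers.

```python
from itertools import cycle
from typing import Callable, Dict, List

# A backend stands in for an origin server: it maps a request path to a body.
Backend = Callable[[str], str]


class ReverseProxy:
    """Toy reverse proxy combining a response cache with round-robin balancing."""

    def __init__(self, backends: List[Backend]) -> None:
        if not backends:
            raise ValueError("at least one backend is required")
        self._backends = cycle(backends)   # round-robin iterator over backends
        self._cache: Dict[str, str] = {}   # path -> cached response body

    def handle(self, path: str) -> str:
        # Caching: serve a stored response without touching any backend.
        if path in self._cache:
            return self._cache[path]
        # Load balancing: forward the request to the next backend in turn.
        backend = next(self._backends)
        body = backend(path)
        self._cache[path] = body
        return body
```

With two backends, the first request for `/a` goes to the first backend and the first request for `/b` to the second, while a repeated request for `/a` is answered from the cache and reaches no backend at all.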